Alain Damasio is no amateur. He is the author of some of the greatest French sci-fi epics, including The Windwalkers, which has sold half a million copies. Most of his work drops us into dystopian universes equipped with ultra-surveillance technologies. One might expect him to be wary of AI.

Yet, when asked about his use of the technology in his work, he concedes:

“I use Claude. For creating sci-fi/fantasy universes, its capabilities are extraordinary.”

“So what’s the difference between you and the AI?” the journalist shoots back.

“Honestly, the question is becoming increasingly scary,” Damasio replies.

It’s not just in France. The phenomenon is accelerating everywhere. Katy Perry posts a screenshot of her Claude Pro subscription on X. Snoop Dogg creates his latest music video entirely with AI. Logan Paul stars in an ultra-realistic TV pilot, created by a 7-person team in one week, bypassing the need to invest millions in special effects.

Renowned directors—some of whom have publicly denounced the technology—are quietly turning to AI video startups like ours to slash production budgets as quickly as possible. Seedance 2.0, the latest Chinese video AI, left one of them dumbfounded:

“It’s perfect,” he wrote to me. “I couldn’t sleep. Can you show me how to use it?”

In software, too, the revolution is accelerating. Jack Dorsey, the co-founder of Block, lays off 40% of his employees (4,000 people), explaining that integrating AI into their processes allowed them to double their profit per employee in just a few months.

The founder of Cursor, the most widely used AI coding software, writes on X that within a year, his engineers will do nothing but orchestrate agents.

One of the top engineers at Anthropic, the giant behind Claude, resigns, penning a public letter where he explains that “the world is in peril” and that “our wisdom must grow as fast as our technological capabilities, at the risk of paying a heavy price.”

AI is here to stay. The grotesque “stochastic parrot” analogy, coined in 2021 by linguist Emily Bender and her co-authors, seems almost comical today. If AI is just a parrot, the flapping of its wings is about to trigger a tsunami.

The Farrier Syndrome

If AI is moving so fast, why do some experts say we shouldn’t panic? Why does your lawyer or doctor friend explain that he tried ChatGPT and it will never replace him?

There are two main reasons: ego, which I’ll discuss further down, and the blindness of experts.

“Silicon Valley is wrong to think it will be safe. I think the math people will be replaced faster than the word people.” — Peter Thiel

In his book Superforecasting, Philip Tetlock shows that predicting the future requires specific skills, the most important being a hyper-generalist's breadth of mind. When you are too specialized, you tend to see your discipline as a silo and neglect the impact of other fields on your own.

If farriers had had to predict the future, they would have imagined horses with stronger hooves.

To make important decisions, there is therefore often no worse idea than asking experts—especially self-proclaimed ones. Unfortunately, they are the most heavily covered by the media because they soothe audiences. And that is the trap politicians are falling into today.

Because Luc Julia, for one, is certain: AI is just a slightly wonky text generator. And despite certain exaggerations on his resume regarding his involvement in inventing Apple’s Siri, this good-natured grandfather tours assemblies and hearings to reassure swooning, pot-bellied senators.

Never mind that the examples he cites date back to GPT-3, released in 2020, and that he gives the impression of not having touched a computer in over three years: to him, AI is just a text generator that hallucinates.

By telling decision-makers exactly what they want to hear, he blinds them to the mountain looming just outside the clouds—and they are the ones flying the plane.

Wrongly convinced they are protected by the State, the passengers (our fellow citizens) forget to demand that politicians tackle this issue.

As for our aging institutions—education, the military, the administration, healthcare—they are in no state to prepare for the most violent paradigm shift in their history.

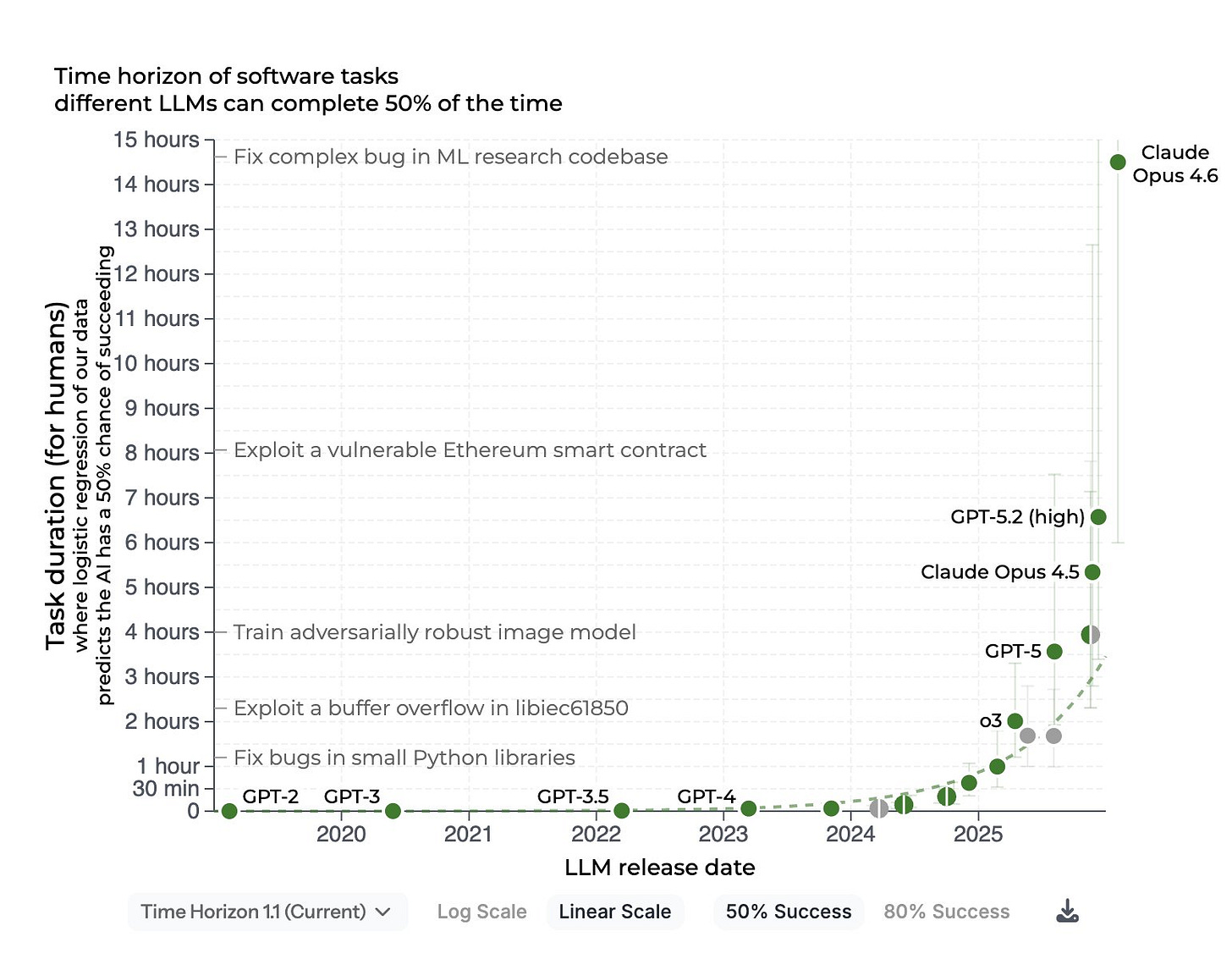

Because while the Senate summons storytellers, the technology is going exponential.

The 22nd Fold

AI is revolutionizing the puzzle pieces that underpin our world: mathematics, physics, code, and more. Taken individually, each piece simply becomes more efficient, nothing more. It’s only when they click together that the magic truly happens.

Millions of researchers are already equipping themselves with agents that allow them to test hypotheses, cross-reference data with other experts, and gain perspective on their blind spots. In laboratories, as in startups and agencies, time is compressing. What used to take a month now takes a day, and will soon take an hour, then a second.

And when time compresses across multiple interconnected fields, progression isn’t linear; it’s exponential.

Humans, bound by their survival instincts, are not equipped to anticipate rare, high-impact events: Nassim Taleb’s famous black swans.

In 1975, researchers Wagenaar and Sagaria showed that even when people are presented with explicit graphs, they systematically underestimate exponential growth, whether it bodes well or ill.

In 1914, many Europeans were convinced that a great war had become impossible. In 2020, street interviews showed smiling people, convinced they would never be locked down, only to rush out and buy toilet paper two days later.

By the way, do you know how many times you’d have to fold a sheet of toilet paper for the stack to reach the Moon? 42 times. By the 22nd fold, it would already tower over the Statue of Liberty. That is an exponential.

Lulled by reassuring speeches, the public imagines that the Statue of Liberty is an insurmountable peak for AI. They can’t see that we’re only a few folds away from the Moon.
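The fold arithmetic is easy to check for yourself. Below is a minimal sketch, assuming a sheet about 0.1 mm thick (the text gives no thickness, so this is an assumption; real toilet paper varies), the Moon at its average distance of about 384,400 km, and the Statue of Liberty at about 93 m from ground to torch. The constant and function names are illustrative only.

```python
# Back-of-the-envelope check of the paper-folding claim.
# Assumption: one sheet is about 0.1 mm thick; each fold doubles the stack.

SHEET_THICKNESS_M = 0.1e-3     # 0.1 mm in meters (assumed, not from the text)
MOON_DISTANCE_M = 384_400_000  # average Earth-Moon distance, about 384,400 km
STATUE_HEIGHT_M = 93           # Statue of Liberty, ground to torch, about 93 m


def height_after(folds: int) -> float:
    """Thickness of the folded stack, in meters, after `folds` doublings."""
    return SHEET_THICKNESS_M * 2 ** folds


def folds_to_reach(target_m: float) -> int:
    """Smallest number of folds whose stack is at least `target_m` tall."""
    folds = 0
    while height_after(folds) < target_m:
        folds += 1
    return folds


if __name__ == "__main__":
    for n in (20, 22, 41, 42):
        print(f"{n} folds: {height_after(n):,.0f} m")
    print("Folds to pass the Statue of Liberty:", folds_to_reach(STATUE_HEIGHT_M))
    print("Folds to reach the Moon:", folds_to_reach(MOON_DISTANCE_M))
```

Under these assumptions the stack passes the statue at the 20th fold, is already about 419 m tall at the 22nd, is still only about 220,000 km tall at the 41st, and first exceeds the Moon's distance at the 42nd. The last doubling alone adds more distance than all the previous ones combined, which is exactly what intuition fails to register.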

The Orwellian Reflex

If the crash is inevitable, our pilots’ first idea will be to pull the emergency brake: regulation. That is the Orwellian reflex.

Ban AI, throttle its capabilities, sprinkle in some surveillance technologies, and the problem is solved.

We must stay wary of these siren songs and keep a clear North Star in mind: what kind of world do we not want to live in?

Do you want to scan your retina to enter a building or post on TikTok? Or to have a social credit score that drops when you jaywalk and bars you from leaving the country if you do it too often?

Hyper-regulation is not just a serious danger (whatever your political leanings, imagine your most feared party wielding that power); it is also a pipe dream in today's geopolitical climate.

AI superpowers are currently engaged in a cold war where technology is the primary weapon. Want to generate a deepfake of Trump? Plenty of open-source Chinese software will let you do it.

Neutering Western software will only aggravate the situation, as it will handicap the economic power that might one day have to pay a universal basic income to its citizens.

Plunging into an Orwellian world would not serve our interests. Above all, regulating to the extreme would mean missing out on the greatest scientific and technological revolution of our species, one that will make the Industrial Revolution and the advent of the internet sound trivial.

Let us therefore contemplate the other side of the coin.

And then all at once

In momento, in ictu oculi, in novissima tuba

(In a moment, in the blink of an eye, at the last trumpet) —

1 Corinthians 15:52

Why on earth continue to develop a technology that carries such societal risks?

Because despite forcing us to confront harsh realities, developing AI aligns perfectly with our nature. It is the technology that will allow us to solve the most important problems we have ever tackled.

And Man, in his deepest nature, is nothing but a problem-solving machine, even if he has to invent the problems himself, as Dostoevsky captured in his Notes from Underground:

“Shower him with every earthly blessing, drown him in happiness until it is over his head [...] well, Man, out of sheer ingratitude, out of sheer mockery, will play a nasty trick on you.”

So, for lack of an alternative, let us look forward.

My engineers are five times more productive than they were just a few months ago. AI writes 100% of our code. I prepare for meetings with Jarvis, my partner’s AI agent, which roughs out the topics for us in advance.

Jarvis is a “ClawdBot,” designed by an engineer whose startup—where he was the sole employee—was bought for a billion dollars by OpenAI. This bot can draft documents, review our team’s performance, fix bugs, and even sort emails.

Soon, my assistant Alfred will chat with Jarvis before passing the relevant information along to us. He will answer the phone when the number is unknown and decide whether to patch the call through, remind me of my appointments, and back me up on the company’s strategic decisions.

These assistants owe their existence to lightning-fast gains in algorithmic efficiency. The cost of generating a million tokens has dropped a hundredfold in two years. The acceleration is inevitable, and AI will be a formidable catalyst in key areas of our daily lives: health, loneliness, transportation, and even war.

Your friend who had a little too much to drink tonight won’t crash into an 18-wheeler on the way home with his wife, because his car will drive them there safely on its own.

Rather than looking for ways to harm himself, a bullied teen crying in his room will be able to count on his faithful AI friend to find the right words and keep him going.

You will become the hero of your favorite show or video game, generated in real-time and fully adapted to your tastes. And you will cry for the first time watching a movie entirely generated by AI, while your partner smirks at you.

But entertainment and daily life are just a warm-up. The real earthquake will play out on our biology.

Thanks to neural implants, Neuralink says it has restored a rudimentary form of sight in monkeys: humans are next. Its implants may also, hopefully soon, help quadriplegics walk again, and much more.

Breakthroughs in the science of rejuvenation in mice suggest that we may soon become Benjamin Buttons—only better controlled.

And if we fail at this Herculean task, robots with infinite patience will care for the elderly in nursing homes.

When your loved one is diagnosed with metastasized cancer, a simple pill or injection will be enough for them to keep their ski trip with you the following week.

Your smartwatch will design a complete nutrition and fitness program for you, and might even help cure depression. In the meantime, Ozempic, the so-called miracle weight-loss drug, could already save $500 million in aviation fuel by 2026.

Rather than heading toward a WALL-E-style future of obese citizens in hoverchairs, we are on the verge of becoming physically and mentally healthier than ever before.

And with lunar bases now on the drawing board, this future could extend beyond Earth sooner than expected. Nietzsche’s Übermensch conquering the stars.

Ad Astra, per Aspera

We will get it, this world we fantasized about while reading Asimov or watching Minority Report. We will see it in our lifetime, for better or for worse.

But first, we must make peace with our ego.

Sic transit gloria humanis

From the 15th century until the last papal coronation in 1963, it was customary for a master of ceremonies to kneel before the newly crowned pope and announce: “Sancte Pater, sic transit gloria mundi” (Holy Father, thus passes the glory of the world).

Just as the Romans expressed it with Memento Mori and Persian storytellers with “This too shall pass”, these phrases remind us that none of us is greater than our biology—and none of us will leave indelible marks in the great fresco of time.

Successive empires, the death of a tyrant or a saint, a pantheon built in their image: all of this will one day be dust. It is the impermanence of everything, masterfully captured by Percy Shelley in his poem Ozymandias:

And on the pedestal, these words appear:

‘My name is Ozymandias, King of Kings;

Look on my Works, ye Mighty, and despair!’

Nothing beside remains. Round the decay

Of that colossal Wreck, boundless and bare

The lone and level sands stretch far away.

Until now, we have only ever measured ourselves against our ancestors—their hubris, their legacies. Today, AI forces us to contemplate a terrifying question: perhaps humanity is not the ultimate culmination of nature. Perhaps we are, as Elon Musk speculated, merely the “biological bootloader for digital superintelligence.”

Spending a decade studying only to be effortlessly bested by a machine is a bitter pill to swallow. Your friend with a wall full of degrees simply cannot stomach the idea that a single text prompt could replace thousands of hours of cramming and the stress that turned his hair gray.

But that is nothing compared to someone who spent 50,000 hours developing a skill to become the best in the world in his field, only to be annihilated by a program. I am, of course, talking about Garry Kasparov’s defeat against Deep Blue in 1997. He was so shocked that he cried foul, convinced that humans had secretly played the moves instead of the machine. But which human could possibly have beaten him?

At the time, Garry thought AI would still need us in his discipline, and he was partially right. In the years following Deep Blue’s feat, an AI assisted by a human still beat the software alone at chess. But as engines kept improving, the human eventually became a liability for his digital teammate.

Will Alain Damasio soon be a liability for an AI? Katy Perry? Elon Musk? Me? You?

In his interview, Damasio speaks of a fourth narcissistic wound, following the three that Freud described:

We are not the center of the universe (Copernicus)

We are nothing but a biological animal (Darwin)

We are not the masters of our unconscious (Freud)

We are not the culmination of intelligence (Sam Altman?)

This wound marks the crossing of a threshold: the superiority of Man with a capital M will likely soon be relegated to a distant past that we will look back on with condescension, just as we do today with bygone civilizations. A necessary, but henceforth dusty, page of history.

Sic transit gloria humanis.

Thus passes the glory of Man.